Ever since I started experimenting with HyperDB, I thought that once I had enough servers at my disposal, and if I ever figured out how to set up MySQL replication, I’d bring the two together. I believe that day has finally come: HyperDB is now deployed across more than 60 sites I’m taking care of.

I want to share with you my configuration and some of the pitfalls I’ve come across – if it helps, great. But mainly these notes are for me to remember how I did it when the next cluster needs to be built.

My Setup

Currently I’m hosting sites with one main server running CentOS 6 and Plesk 10.4 – that’s for web and email. On another server I’m hosting my databases. This server is also running Plesk which makes replication a little bit more difficult.

I want to build a “cluster” of 4 database servers in total, two masters which replicate each other and can be used for read and write queries, and two slaves which replicate one master each. These are for read queries. Thanks to HyperDB I can decide whether I want to use my masters for write only and leave the read queries to my slaves. I could even expand on those slaves later if I wanted to.

I have considered circular multi-master replication, but that’s just giving me a headache. Imagine one server going down: all of them would be out of sync. It’s got MESSY written all over it. If I need more masters later, I’d rather build another cluster altogether.

At the time of writing I’m dealing with WordPress 3.3.1 and HyperDB 1.1. MySQL on my system is at version 5.1 for the masters and 5.5 for the slaves. I know this sounds dangerous, and 5.5 has some additional configuration options in my.cnf, as well as an additional database (performance_schema). But testing shows that these replicate well despite the different versions.

Before we begin

HyperDB is great, but it DOES NOT replicate your servers for you. You need to do this yourself. Once replication is in place, HyperDB will work its magic – but it won’t build a cluster for you.

First we need to set up two multi-masters, so two servers need to replicate onto each other. I’ve written about how to do this in another article. Once that’s done we need to introduce one slave to Master 1, so technically Master 1 replicates onto two machines. For now, Master 2 will only replicate onto Master 1, because we’ll re-introduce our current standalone database server as Slave 2. More about that later.

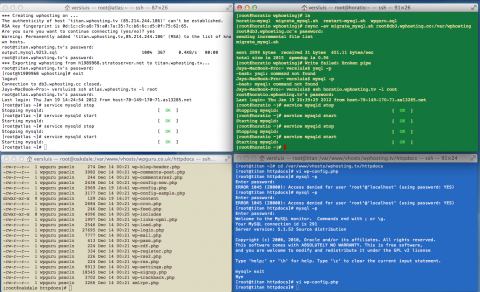

To test if this is working, I like having 4 SSH windows open at a time. Three of them are logged into my database servers, and one of them is an ad-hoc. This is what it looks like:

I like creating a database on one of the servers and checking the others to make sure it has been replicated properly. You do this with three easy MySQL commands:

create database aaa; show databases; drop database aaa;

These create a database called “aaa”, list all databases, and delete “aaa” again. In an ideal world, you create it on Master 1 and it shows up on both Master 2 and Slave 1. You should also be able to delete the same database from Master 2 and see it vanish from Master 1 and Slave 1.
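If flipping between SSH windows gets tedious, the same check can be scripted. The following is only a rough sketch: the function name `db_visible_on` and the host names are made up for illustration, and it assumes the `mysql` client can reach every server over the network with credentials stored in `~/.my.cnf`, which may not match your setup.

```shell
# Hypothetical helper: report whether database $1 is visible on each host.
# Assumes remote MySQL access and credentials in ~/.my.cnf.
db_visible_on() {
  local db="$1"
  shift
  local host
  for host in "$@"; do
    # -N drops the column header, -B gives plain one-name-per-line output
    if mysql -h "$host" -N -B -e 'SHOW DATABASES' | grep -qxF -- "$db"; then
      echo "$host: present"
    else
      echo "$host: missing"
    fi
  done
}

# Example (host names are placeholders):
#   db_visible_on aaa master1 master2 slave1
```

Run it once after creating “aaa” and once after dropping it; every server should agree both times.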

Beware: if even ONE mistake happens, replication on the affected server stops immediately and stays stopped until you fix the issue. By “mistake” I mean, for example, dropping a database that the slave doesn’t know about, or changing a value which does not exist there. The only remedy in such cases is to stop the slave, point it to the synced master log position and then start it again.
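That remedy boils down to three SQL statements. As a minimal sketch, here is a small shell function that prints them for a given log file and position. The function name `resync_sql` is hypothetical, and the file name and position in the example are placeholders: the real values come from running SHOW MASTER STATUS on the master once the data on both sides matches again.

```shell
# Hypothetical helper: print the SQL that re-points a stalled slave.
# $1 = master binary log file, $2 = position within that file
# (both taken from SHOW MASTER STATUS on the master).
resync_sql() {
  local log_file="$1" log_pos="$2"
  cat <<SQL
STOP SLAVE;
CHANGE MASTER TO MASTER_LOG_FILE='${log_file}', MASTER_LOG_POS=${log_pos};
START SLAVE;
SQL
}

# Example (file name and position are placeholders):
#   resync_sql mysql-bin.000012 107 | mysql -u root -p
```

Because CHANGE MASTER TO only gets the log file and position here, the slave keeps the master host and login it was already configured with.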

While you’re testing you should also emulate what happens when a server goes down. So switch Master 1 off by using the following shell command:

service mysqld stop

Then create a database on Master 2 and switch Master 1 back on via

service mysqld start

In an ideal world Master 1 should now show the new database. Slave 1 on the other hand may need another minute to catch up – depending on how often you’ve told it to reconnect to the master. Mine is set to reconnect once every minute, so grab a coffee and check again. Do it the other way round too so you’re absolutely certain that things replicate even when a server can’t be reached.
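In MySQL the reconnect interval is the MASTER_CONNECT_RETRY option of CHANGE MASTER TO, given in seconds. To see whether a slave has caught up, look at the two thread flags in the output of SHOW SLAVE STATUS. The sketch below is a hypothetical filter (the name `slave_ok` is mine) that reads that output on standard input and boils it down to one line. It assumes the usual `\G` layout with one `Field: value` pair per line.

```shell
# Hypothetical filter: summarise SHOW SLAVE STATUS\G output read from stdin.
# Prints "replicating" only when both replication threads report "Yes".
slave_ok() {
  awk -F': ' '
    /Slave_IO_Running:/  { io  = $2 }
    /Slave_SQL_Running:/ { sql = $2 }
    END {
      if (io == "Yes" && sql == "Yes") {
        print "replicating"
      } else {
        print "not replicating (IO: " io ", SQL: " sql ")"
      }
    }
  '
}

# Example (credentials are placeholders):
#   mysql -u root -p -e 'SHOW SLAVE STATUS\G' | slave_ok
```

While the master is switched off, expect the IO thread to report something other than “Yes”; once it is back and the retry interval has passed, both flags should return to “Yes”.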
